The past two years have been an exciting time at Stack Overflow. While we still run stackoverflow.com in an on-premises data center, we have been migrating Stack Overflow for Teams to Microsoft Azure.

Stack Overflow for Teams is our private SaaS product for internal knowledge sharing and collaboration within organizations. It’s basically your own private stackoverflow.com. Being a SaaS product, it’s very well suited to run in the cloud with flexible scaling and abstraction of the hardware we run on. But because of its origin as an outgrowth of Stack Overflow, it began its life using the same infrastructure and hardware as the public sites.

This two-part blog series will discuss our cloud journey, the decisions we made, and the things we learned while moving Stack Overflow for Teams to Azure.

Why go to the cloud?

Since the beginning, Stack Overflow and its sites ran on physical hardware designed to maximize the efficient use of resources so that we could run on the fewest servers possible. We were pretty proud of this: one of the original servers hung on the wall of our New York City office long after it stopped working.

But once our Stack Overflow for Teams business took off, running everything on a fixed number of machines was no longer feasible. We wanted to stop having engineers physically go to our data center to address hardware issues and upgrade hardware. Our engineers should focus on what adds the most value for our customers, and that’s not maintaining physical infrastructure.

Another benefit of migrating to the cloud is that it makes it easier to comply with security frameworks such as SOC 2. Azure’s data centers maintain multiple compliance attestations and certifications, and its tooling helps keep our resources compliant. We went through our first SOC 2 process in 2020, and it can be time-consuming; Azure would simplify a lot of it.

An additional benefit is that with virtual infrastructure, Azure makes it a lot easier to spin up additional ephemeral environments where we can test new features and infrastructure changes without disrupting other developers.

So how did you do it?

Three years ago, we came up with a plan to split our Stack Overflow for Teams product and move just the Business tier into Azure. This would let us get SOC 2 Type II accreditation for our Business customers as quickly as possible, while keeping our Free and Basic customers on-premises and leaving their migration as a future problem.

As we executed this plan, we found that it was incredibly difficult to split our product across two locations without severely hurting the user experience. Suddenly, users needed to know which environment their account was linked to when signing in. While we could build something to help with logins, the split between Business and Basic/Free environments proved fatal for integrations (Slack, Jira, Microsoft Teams). We couldn’t control their app installation process without creating two separate apps, which the app stores didn’t allow.

After working on this path for almost a year, we decided to pivot to a new plan: move all three tiers of Teams and all existing customers to Azure all at once.

This new plan consisted of five phases:

- Phase I: Move Stack Overflow for Teams from stackoverflow.com to stackoverflowteams.com

- Phase II: Decouple Teams and stackoverflow.com infrastructure within the data center

- Phase III: Build a cloud environment in Azure as a read-only replica while the datacenter remains as primary

- Phase IV: Switch the Azure environment to be the primary environment customers use

- Phase V: Remove the link to the on-premises datacenter

In this blog post we will discuss phase I. The second post will cover the other phases.

A multi-tenant Stack Exchange Network

When we started Teams six years ago, it was part of stackoverflow.com. Our company is known for our Stack Exchange Network and we wanted to give developers a familiar feeling and integrate with their daily usage of stackoverflow.com. Naturally, it made sense to let Teams users access their private sites from the sidebar of the public site.

Now to understand how we built Teams, you first need to know how we architected the Stack Exchange Network. You may be most familiar with stackoverflow.com, but if you look at https://stackexchange.com/sites, there is a huge list of sites (173 at last count) that all use the same Q&A foundation that we originally built for stackoverflow.com.

This foundation is multi-tenant. We have a central SQL Server database named “Sites” that contains data shared across the network. Most important for this discussion, the Sites database contains the list of network sites. Each network site then has its own content database that contains all the users, posts, votes, and other data for that specific site. All of this data belongs to publicly accessible sites, so the access controls on it were pretty simple and uniform.

Each site has a host address such as stackoverflow.com, superuser.com, or cooking.stackexchange.com. Whenever a request comes into our app, we inspect the host and check whether it matches one of our known network sites. That's why you'll see an error page when you go to a nonexistent site such as https://idontexist.stackexchange.com/.
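Here is a minimal Python sketch of this first level of multi-tenancy. The request host selects a network site, and the site selects its own content database. The `Site` record, its fields, the content database names, and `resolve_site` are all hypothetical stand-ins for the Sites database, not its actual schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Site:
    """Hypothetical stand-in for one row of the central Sites database."""
    site_id: int
    host: str        # public host name, e.g. "superuser.com"
    content_db: str  # name of this site's own content database (illustrative)


# Illustrative subset of the network; the real list lives in the Sites database.
SITES = [
    Site(1, "stackoverflow.com", "StackOverflow"),
    Site(2, "superuser.com", "SuperUser"),
    Site(3, "cooking.stackexchange.com", "Cooking"),
]

# Index by host once so resolving a request is a single dictionary lookup.
SITES_BY_HOST = {site.host: site for site in SITES}


def resolve_site(host: str) -> Optional[Site]:
    """Return the network site for a request host, or None if it is unknown."""
    # Host names are case-insensitive; drop any port suffix such as ":443".
    normalized = host.split(":", 1)[0].lower()
    return SITES_BY_HOST.get(normalized)


if __name__ == "__main__":
    for host in ["superuser.com", "idontexist.stackexchange.com"]:
        site = resolve_site(host)
        if site is None:
            print(f"{host}: site not found")  # the error-page case
        else:
            print(f"{host}: using content database {site.content_db}")
```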

Teams adds another level of multi-tenancy, where a site (the parent) hosts Teams (the children). In the past, when you went to stackoverflow.com/c/yourteam, the first layer of multi-tenancy, through the Sites database, brought you to stackoverflow.com. The team name was then used to find your team’s content.
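The two levels can be sketched in Python as follows. First the host is resolved to a parent site. Then a `/c/<team>` path prefix is resolved to a child team under that parent. The data structures, the `resolve` function, and the allowed characters in a team name are hypothetical and chosen only for illustration.

```python
import re
from typing import NamedTuple, Optional


class Resolution(NamedTuple):
    site_host: str            # first level: the network site (the parent)
    team_slug: Optional[str]  # second level: the team (the child), if any


# Hypothetical data: known network sites and, per parent site, its teams.
KNOWN_SITES = {"stackoverflow.com", "superuser.com"}
TEAMS_BY_PARENT = {"stackoverflow.com": {"yourteam", "another-team"}}

# Team URLs look like /c/<team-name>, optionally followed by more path.
TEAM_PATH = re.compile(r"^/c/([A-Za-z0-9-]+)(?:/|$)")


def resolve(host: str, path: str) -> Optional[Resolution]:
    """Resolve a request to (parent site, team), or None if either level fails."""
    host = host.lower()
    if host not in KNOWN_SITES:
        return None  # first level failed: unknown network site
    match = TEAM_PATH.match(path)
    if match is None:
        return Resolution(host, None)  # an ordinary public page on the site
    slug = match.group(1).lower()
    if slug not in TEAMS_BY_PARENT.get(host, set()):
        return None  # second level failed: no such team under this parent
    return Resolution(host, slug)
```

In this sketch, a team is only found under the parent it belongs to. That is why changing a team's parent later becomes the key operation.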

Functionally, this gave us what we needed to make it work, but because this was private customer data instead of public site data, we also needed to think about securing this data.

A secure data center

Historically, because we only hosted publicly available data, engineers had broad permissions to access the servers and databases to troubleshoot issues. For example, at our scale we sometimes have performance issues that are easiest to diagnose by connecting to a machine and creating a memory dump.

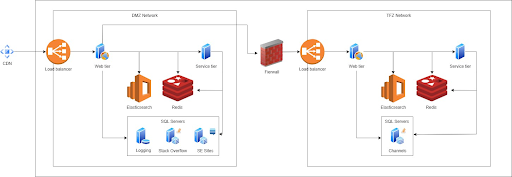

This wouldn't work for our Teams product. Teams contains private customer data that we as engineers should never be able to access. So to make sure that we can secure customer data, we had to make some changes to the data center as shown in the following diagram:

On the left side, you see our DMZ. This is what we had before launching Teams. The DMZ is where your request as a user comes in, where the Sites database is located, and where the content databases for all the different Stack Exchange sites live.

Now if you hit https://stackoverflow.com/c/my-team, your request gets intercepted and forwarded to the right side of the diagram: the TFZ (teams firewall zone or teams fun zone depending on who you ask). The TFZ is fully locked down. Engineers don't have access outside of documented break-the-glass situations and customer data cannot be queried.
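As a rough illustration, here is a Python sketch of the routing decision a front end in the DMZ might make. The `Zone` enum and `target_zone` are hypothetical names. The sketch also assumes that matching the `/c/<team>` path prefix is enough to identify a Teams request, which is a simplification of whatever the real interception logic does.

```python
import re
from enum import Enum


class Zone(Enum):
    DMZ = "dmz"  # public sites, the Sites database, and public content databases
    TFZ = "tfz"  # locked-down zone that holds the private Teams databases


# Assumption: team URLs look like /c/<team-name>, optionally with more path.
TEAM_PATH = re.compile(r"^/c/[A-Za-z0-9-]+(?:/|$)")


def target_zone(path: str) -> Zone:
    """Decide where a request that arrived in the DMZ should be handled."""
    if TEAM_PATH.match(path):
        return Zone.TFZ  # forward: private team data is only reachable in the TFZ
    return Zone.DMZ      # serve public pages directly from the DMZ
```

Only team URLs cross into the TFZ. Public Q&A pages such as `/questions` never leave the DMZ.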

This does mean that although all the Teams databases are inside the TFZ, the Sites database, which lives in the DMZ, is still shared between Teams and Stack Overflow.

Getting Teams into Azure meant that we had to separate Teams from the public sites on a functional level, all the way down to the hardware. Splitting Teams off onto its own hardware and databases was a complex project. What scared us most was that certain steps were big bang changes with no way to roll back. We had to get things right or fix forward, and that added a lot of risk.

That’s why we looked at making the individual phases smaller and less risky. We decided the first thing we could do was move Teams from stackoverflow.com to its own domain: stackoverflowteams.com.

Moving to stackoverflowteams.com

Our application can already run multiple sites, and sites can have a parent. So we came up with the idea of making Teams a separate site in the Stack Exchange network with some special settings, and making every team a child of this new site. The new parent site would have its own domain: stackoverflowteams.com. We would build out this new site in small steps and work out all the user experience changes we had to make. This way, we could decouple the infrastructure changes from the user experience changes, making things less complicated and less risky, and eliminate some of those big bang steps altogether.

We added a new entry in the Sites DB for our new 'Stack Overflow for Teams' site and added a new site type, TeamsShellSite, that we could use in code to differentiate between a regular Stack Exchange site such as stackoverflow.com and our new site: stackoverflowteams.com.

The TeamsShellSite became the new parent for all individual Teams. If you go to stackoverflowteams.com while not logged in, you will see a welcome page with some information and options to create a free team or log in. This page is served from the TeamsShellSite.
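Here is a small Python sketch of how a dedicated site type lets code branch on the new parent site. Only the type name TeamsShellSite comes from the text. `SiteType`, its member names, `SiteRecord`, and `anonymous_landing_page` are hypothetical illustrations.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class SiteType(Enum):
    # Hypothetical member names; only TeamsShellSite is named in the text.
    NETWORK_SITE = "network"          # a regular site such as stackoverflow.com
    TEAMS_SHELL_SITE = "teams_shell"  # TeamsShellSite: stackoverflowteams.com


@dataclass(frozen=True)
class SiteRecord:
    """Hypothetical shape of an entry in the Sites database."""
    site_id: int
    host: str
    site_type: SiteType
    parent_id: Optional[int] = None  # set for child sites


def is_teams_shell(site: SiteRecord) -> bool:
    return site.site_type is SiteType.TEAMS_SHELL_SITE


def anonymous_landing_page(site: SiteRecord) -> str:
    """Pick the page shown to a visitor who is not logged in (illustrative names)."""
    if is_teams_shell(site):
        # Welcome page with options to create a free team or log in.
        return "teams-welcome"
    return "questions"
```

Because the distinction lives in the site record rather than in host-name checks, the rest of the code can ask "is this the Teams shell?" without hard-coding stackoverflowteams.com.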

If you do have a team and go to stackoverflowteams.com/c/your-team, your request still hits the DMZ. There, the base host address is mapped to the new TeamsShellSite record, and your request is forwarded to the TFZ.

Removing stackoverflow.com as the parent required a lot of changes. Everything previously hosted on stackoverflow.com now had to be handled by the TeamsShellSite, including the account page, navigation between Teams, third-party integrations, and customer configurations for SSO and SCIM. We also had to put redirects in place so customers could access a team through both stackoverflow.com/c/your-team and stackoverflowteams.com/c/your-team.
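A redirect like this can be sketched in Python with the standard `urllib.parse` module. `MIGRATED_TEAMS` and `redirect_target` are hypothetical. In practice the "has this team moved?" check would consult the team's parent site, not a hard-coded set.

```python
import re
from typing import Optional
from urllib.parse import urlsplit, urlunsplit

OLD_HOST = "stackoverflow.com"
NEW_HOST = "stackoverflowteams.com"
TEAM_PATH = re.compile(r"^/c/([A-Za-z0-9-]+)(?:/|$)")

# Hypothetical set of teams already moved to the new parent site.
MIGRATED_TEAMS = {"your-team"}


def redirect_target(url: str) -> Optional[str]:
    """Return the new URL for a migrated team on the old host, else None."""
    parts = urlsplit(url)
    if parts.hostname != OLD_HOST:
        return None
    match = TEAM_PATH.match(parts.path)
    if match is None or match.group(1) not in MIGRATED_TEAMS:
        return None  # not a team URL, or the team hasn't moved yet
    # Keep scheme, path, query, and fragment; only the host changes.
    return urlunsplit((parts.scheme, NEW_HOST, parts.path, parts.query, parts.fragment))
```

A temporary (302) redirect is probably the safer choice here. Browsers may cache a 301 for a long time, which would work against moving a team back.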

This was especially important for our authentication and ChatOps integrations. Many of our customers have set up integrations such as SSO, SCIM, Jira, Slack, and Microsoft Teams. These integrations pointed to stackoverflow.com, and we wanted to make sure we wouldn't break them when we migrated a team to stackoverflowteams.com. We also wanted to point our integrations to a new subdomain, integrations.stackoverflowteams.com, to decouple them from our host domain.

As you can imagine, this took a lot of testing to make sure we covered all edge cases. For example, until we had updated all our integrations to point to integrations.stackoverflowteams.com, we kept redirects from stackoverflow.com in place. However, it turned out that the Jira integration’s installation page didn’t work with redirects because of the embedded iframe Jira uses. We worked around that limitation by replacing the host header, instead of redirecting, for that specific page. Together, these changes made up the bulk of the work of moving Teams away from Stack Overflow as a parent site and onto a new domain.
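Here is a Python sketch of that special case. Most legacy integration URLs get an ordinary redirect to the integrations subdomain. The one page loaded inside Jira's iframe is instead served in place with its Host header rewritten. The integration paths, `Action`, and `route_integration_request` are hypothetical, since the actual routes aren't given.

```python
from typing import Dict, NamedTuple, Optional

OLD_HOST = "stackoverflow.com"
INTEGRATIONS_HOST = "integrations.stackoverflowteams.com"

# Hypothetical routes: the real integration paths aren't listed in the text.
INTEGRATION_PREFIXES = ("/integrations/slack", "/integrations/msteams", "/integrations/jira")
# Hypothetical path of the Jira installation page that is loaded inside an iframe.
IFRAME_PAGES = {"/integrations/jira/install"}


class Action(NamedTuple):
    kind: str                                 # "pass", "redirect", or "rewrite-host"
    location: Optional[str] = None            # redirect target, if any
    headers: Optional[Dict[str, str]] = None  # headers to forward, if rewriting


def route_integration_request(host: str, path: str, headers: Dict[str, str]) -> Action:
    if host.lower() != OLD_HOST or not path.startswith(INTEGRATION_PREFIXES):
        return Action("pass")  # not a legacy integration URL
    if path in IFRAME_PAGES:
        # A redirect breaks inside Jira's iframe, so serve the page in place and
        # rewrite the Host header (header names are case-insensitive).
        rewritten = {k: v for k, v in headers.items() if k.lower() != "host"}
        rewritten["Host"] = INTEGRATIONS_HOST
        return Action("rewrite-host", headers=rewritten)
    # Everything else gets an ordinary redirect to the integrations subdomain.
    return Action("redirect", location=f"https://{INTEGRATIONS_HOST}{path}")
```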

Once the code changes to support the TeamsShellSite were in place, we could start moving Teams from stackoverflow.com to stackoverflowteams.com by changing each team’s parent site and updating the cache. We built some internal helpers to move a single team or a batch of Teams to a new parent, which made the process easy and painless. The big advantage was that we could not only move a team easily but also move it back if something went wrong. That made all the changes less scary, since we knew none of them was a big bang change without a rollback.
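Here is a minimal Python sketch of what such helpers could look like. Re-parenting a team returns its previous parent, and a batch move keeps an undo log so it can be rolled back, automatically on failure or later by hand. `TeamRegistry`, `move_team`, `move_teams`, `roll_back`, and the parent IDs are all hypothetical; the real helpers operate on the Sites database and its cache.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable

# Hypothetical parent site IDs used for illustration only.
STACKOVERFLOW_ID = 1
TEAMS_SHELL_ID = 2


@dataclass
class TeamRegistry:
    """In-memory stand-in for team -> parent site records plus a lookup cache."""
    parent_of: Dict[str, int]
    cache: Dict[str, int] = field(default_factory=dict)

    def refresh(self, slug: str) -> None:
        # In this sketch only the moved team's cache entry is updated.
        self.cache[slug] = self.parent_of[slug]


def move_team(registry: TeamRegistry, slug: str, new_parent: int) -> int:
    """Re-parent one team and return its old parent so the move can be undone."""
    old_parent = registry.parent_of[slug]  # raises KeyError for an unknown team
    registry.parent_of[slug] = new_parent
    registry.refresh(slug)
    return old_parent


def roll_back(registry: TeamRegistry, undo_log: Dict[str, int]) -> None:
    """Move every team in the undo log back to the parent it had before."""
    for slug, old_parent in undo_log.items():
        move_team(registry, slug, old_parent)


def move_teams(registry: TeamRegistry, slugs: Iterable[str], new_parent: int) -> Dict[str, int]:
    """Move a batch of teams; on any failure, undo the moves already made."""
    undo_log: Dict[str, int] = {}
    try:
        for slug in slugs:
            old_parent = move_team(registry, slug, new_parent)
            undo_log.setdefault(slug, old_parent)  # keep the original parent
    except Exception:
        roll_back(registry, undo_log)
        raise
    return undo_log
```

For example, `undo = move_teams(registry, ["team-a", "team-b"], TEAMS_SHELL_ID)` moves two teams, and `roll_back(registry, undo)` puts them back under their original parent.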

We started by migrating our own internal Teams: if we were going to break something, we should be the ones to feel it. Once our own Teams were working, we started migrating customers. We moved all the small Free teams first, then the Basic tier, and finally the Business tier.

We did run into an issue with caching. Suppose a team had moved from stackoverflow.com to stackoverflowteams.com and a user tried to access it on stackoverflow.com. Our code looked at the cached data, couldn’t find the site, and reloaded all sites into the cache, only then to figure out that it should redirect. That happened on every such request. Reloading all the cached sites is a very expensive operation, so yes, we might have taken the site down once or twice, but that only added to the excitement.
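One common way to avoid this pattern is negative caching: remember recent misses so that repeated requests for a key trigger at most one full reload per time window. Here is a Python sketch under stated assumptions; it is not necessarily how the issue was fixed. `SiteCache`, `load_all`, and `miss_ttl` are hypothetical names, and the injectable clock exists only to make the behavior testable.

```python
import time
from typing import Callable, Dict, Optional


class SiteCache:
    """Sketch: site lookups with a negative cache to avoid repeated full reloads."""

    def __init__(self, load_all: Callable[[], Dict[str, int]],
                 miss_ttl: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._load_all = load_all  # expensive: reads every site from the database
        self._miss_ttl = miss_ttl  # seconds to trust a recorded miss
        self._clock = clock
        self._sites = load_all()
        self._misses: Dict[str, float] = {}  # key -> time the miss was recorded
        self.reloads = 0                     # full reloads triggered by misses

    def get(self, key: str) -> Optional[int]:
        if key in self._sites:
            return self._sites[key]
        seen = self._misses.get(key)
        if seen is not None and self._clock() - seen < self._miss_ttl:
            return None  # recent miss: skip the expensive reload
        # Unknown key: reload once, then remember the miss if it is still absent.
        self._sites = self._load_all()
        self.reloads += 1
        if key in self._sites:
            self._misses.pop(key, None)
            return self._sites[key]
        self._misses[key] = self._clock()
        return None
```

The trade-off is that a site added right after a miss stays invisible for up to `miss_ttl` seconds. That is usually acceptable compared with reloading every site on every request.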

In December 2022, we completed this first phase after almost a year of work. The customer-facing changes were now finished, and all customers received communication and support for the changes they had to make. We were now successfully running all of Stack Overflow for Teams on its own domain!

Now we could move on to Phase II: removing our dependencies on the shared Sites database and on the DMZ.