Although the name “DeveloperWeek” may be a bit of a misnomer (it was, in fact, less than a week long), DeveloperWeek 2026 was in every other way exactly what one might expect: an event teeming with the latest and greatest tooling, workflows, and ideas for the technical superheroes keeping all of our favorite products, websites, and infrastructure alive. While no big technological announcements (or cars) were dropped at this event à la re:Invent, the smaller, more intimate affair centered on the actual everyday work of developers, who need to get into the nitty-gritty of their codebases and tools to someday achieve that prophesied 10x development. And at the heart of the conversations at DeveloperWeek 2026 was the question we’ve all been asking: are AI tools actually good?

AI that’s actually usable means giving humans agency

One thing that seems to be the rock in everyone’s shoe, at least at DeveloperWeek, is the usability of AI tools. One insight from my sessions stood out in particular: many AI tools aren’t actually built to be highly usable. Most of the time they are built with efficiency and speed in mind, not ease of use. But if you’re going to build an AI tool, people need to actually want to use it.

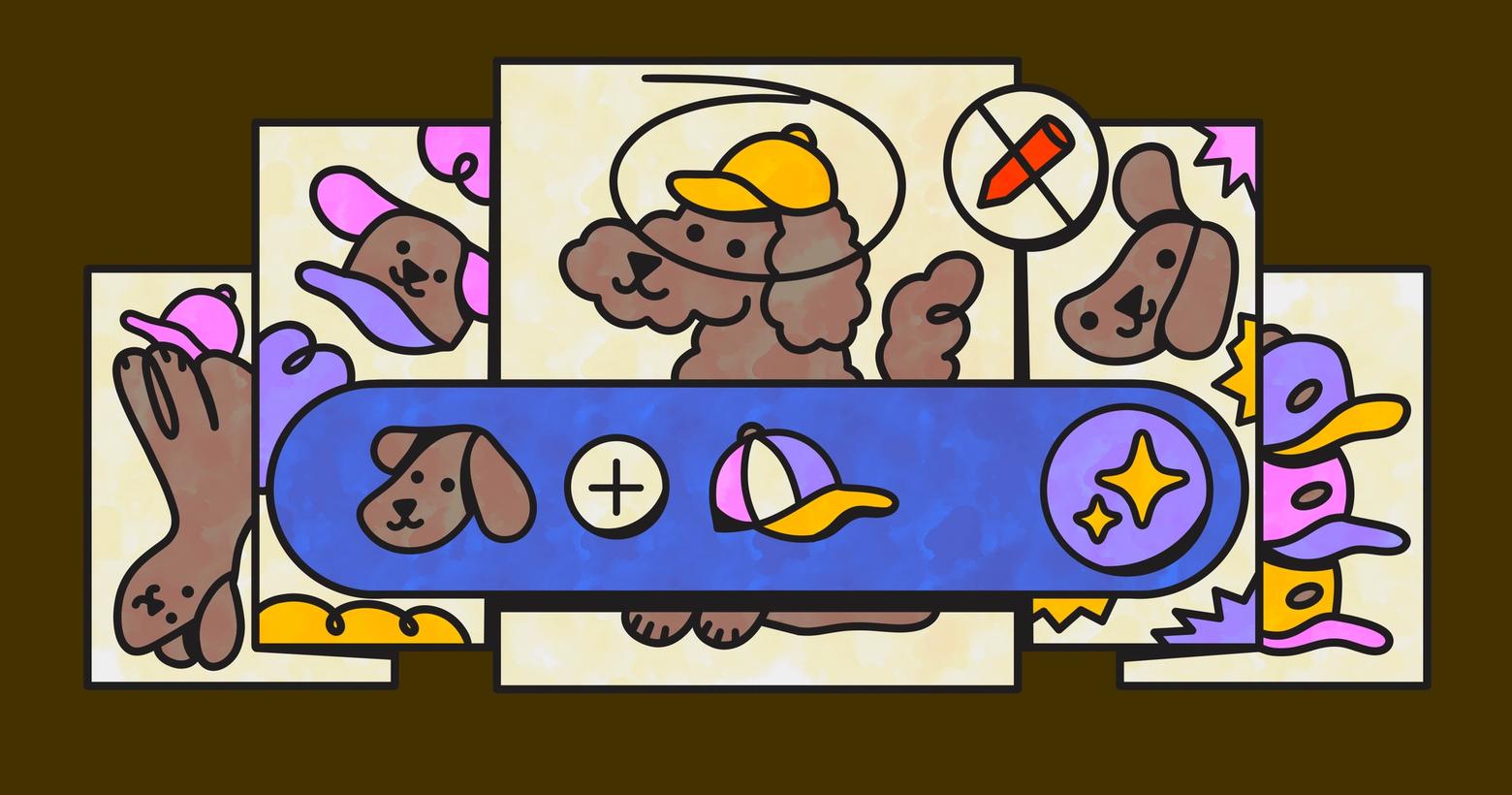

In her session on creating AI products that people actually want to use, Caren Cioffi of Agenda Hero told the very relatable story of struggling to get an AI image generator to create the perfect image. The AI created an image that was almost right on the first try, but each attempt to fix small issues in the image produced worse and worse results. That’s because generating images with AI basically means stuffing a prompt into a black box and hoping for the best: the pixels it spits out will be different every time.

The non-determinism of AI (the fact that it creates something a little different each time) is part of the magic of AI. It’s also what makes AI tools so annoying to use. AI tools take work off our hands, producing a speedy and efficient approximation of what we want from just a few words. But the results are only as good as our prompts, and even then they lack the nuanced perspective that comes with human taste. And turning an approximation that’s almost right into exactly what we want is really hard when the tool you’re using is a black box that only accepts natural-language prompts. For developers, and users as a whole, it can feel less like we’re wielding the AI tool for our own purposes and more like the AI tool is taking us on a wild ride through its own creative process. This is no fun when you’re just trying to generate an image for your mom’s birthday present, as in Cioffi’s case, and quadruply no fun when the AI should be helping you fix critical bugs in your codebase.

Cioffi’s solution is to give humans back agency over their AI tools. One way to do this is to let users regenerate small sections of the AI’s output, or (wild idea) just let them edit it. Maybe you don’t want the AI tool to come up with a whole new answer to your question every time, or to regenerate an entire image when there’s one small issue. Instead, actually usable AI lets you make small edits as you see fit, directly in the UI.
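For readers building these tools, here is a minimal Python sketch of what that idea could look like under the hood. It is not Agenda Hero’s implementation or any real product’s API; it assumes a hypothetical `Draft` class that splits an AI output into segments, lets the user edit any segment directly (locking it so the AI won’t overwrite it), and regenerates only the one segment the user asks about. The `generate` callable and `fake_model` are stand-ins for whatever model call your tool actually makes.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Draft:
    """Hypothetical model of an AI output split into independently editable segments."""
    segments: list[str]
    locked: set[int] = field(default_factory=set)  # segments the user edited by hand

    def edit(self, index: int, text: str) -> None:
        # The user fixes a segment directly; lock it so later regenerations leave it alone.
        self.segments[index] = text
        self.locked.add(index)

    def regenerate(self, index: int, generate: Callable[[str, list[str]], str]) -> None:
        # Regenerate only the requested segment, passing the rest of the draft as context.
        if index in self.locked:
            raise ValueError(f"segment {index} was edited by the user and is locked")
        context = [s for i, s in enumerate(self.segments) if i != index]
        self.segments[index] = generate(self.segments[index], context)

    def text(self) -> str:
        return "\n".join(self.segments)


def fake_model(old: str, context: list[str]) -> str:
    # Stand-in for a real (non-deterministic) model call; here it just uppercases.
    return old.upper()


d = Draft(["Happy birthday, Mom!", "a cake with candles", "blue background"])
d.edit(2, "green background")   # hand-fix one detail instead of rerolling everything
d.regenerate(1, fake_model)     # reroll only the part that is still off
print(d.text())
```

The design choice worth noting is the lock: once a human has fixed something, the AI can no longer quietly undo it, which keeps the human in charge of the parts they already care about.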

As AI tool usage becomes more ubiquitous and use cases become more specific, it really is in the best interest of AI developers to consider how easy the tool is to use in practice, not just how fast the model is or how solid its logic is. Users are beginning to ask themselves: is this tool actually making me faster, or giving me more work? When your users are forced to regenerate an output over and over just to fix something that’s a little off, they may begin to wonder whether it would have been faster to just do it by hand. And in enterprise settings where AI is being asked to automate wide swathes of our work, those small errors stack on top of each other, leaving developers with mountains of technical debt. How much easier it would be if we could just fix the one or two things that need fixing before everything spirals out of control. When you give humans the agency to wield the tool as they like, you make it usable. When you make it usable, you make it adoptable.

Context, context, context

As a sort of fun game for myself, I kept a running tally of all the buzzwords I heard in the sessions I attended. One word that racked up quite a few tally marks as I traversed the floor of the San Jose convention center was context. That’s not surprising if you know anything about the major roadblocks organizations are starting to hit in their AI strategies. As you know, AI’s abilities are only as good as its training data, and most training data doesn’t capture the particular nuances and needs of a given company. AI coding tools without company context, for instance, will generate code that ignores a company’s established standards and architecture, leaving many developers with the janitorial job of cleaning up and reorganizing that code. This is particularly frustrating because the knowledge that gets projects across the finish line is in the same place it always has been: right there, in developers’ heads.

For AI strategies to turn current staff into the foretold 10x developers, LLMs need the knowledge that employees already have. What this looks like depends on which company you ask: for some it means accessing data through MCP servers, feeding their bots meeting notes, handcrafting and adding personas, or placing other guardrails on their AIs so they only take actions based on the specifications given to them. Even the collaborative design tool Figma is adding context to its AI in the form of user-provided brand kits and copy specifications. Access to knowledge and context seems paramount for AI tools to be actually useful in our everyday workflows.

In Stack Overflow’s keynote session, our Chief Product and Technology Officer Jody Bailey described context as the game changer for AI tools, a sort of master key for unlocking their full potential. Because each organization has very specific workflows, communication methods, and guardrails, an out-of-the-box AI trained on publicly available data will never fully deliver the efficiency and productivity gains developers are after, no matter how powerful it is in its factory settings. And how can you trust an AI to help your company if it knows nothing about your company beyond what’s available on the wide-open internet?

At the crux of AI’s need for context is the fact that developers don’t seem to trust AI tools. Developers often criticize AI for incorrect answers and mistaken actions, both of which cost them time. As I said before, much of the “productivity gain” from AI tools ends up wasted when developers have to rework slightly incorrect answers or code. And while making output easier to edit may help with usability and adoption, constant reworking is neither a productive nor a sustainable AI strategy. You can’t just treat the symptoms; you have to find a cure. Context may just be that cure.

As Lena Hall, Senior Director of Developer Relations at Akamai, succinctly put it in her own session: Context is all you need. In most of today’s processes, it falls on the human in the loop to check the AI’s work and make sure it adheres to the specifics of their codebase. But instead of forcing stopping points along the way so humans can fix the AI’s mistakes, Hall recommended building domain expertise in from the start, while the agent’s logic is being formed. In her words, this is not a problem of model intelligence but of information design. Enterprises need to feed the necessary industry and company context to their agents beforehand, or otherwise make it available to their tools while they reason. Our own enterprise product, Stack Internal, uses an MCP server to feed human-validated data to agents, but Hall noted that A2A and advanced RAG can also help solve the context problem.

Interoperability in agent workflows

During his session on creating enterprise AI frameworks that actually work, IBM's Chief Architect for AI Nazrul Islam reinforced the need for interoperability in agentic systems. To him, building millions of agents isn’t enough. Just like people, agents actually need to work together to get real stuff done. Surprise!

While the current state of AI tools requires that we humans stay in the loop, developers and technical teams clearly yearn to pass off the parts of their jobs that are more taxing than fun. It’s why so many companies are trying to build AI tools that handle documentation and code review for us. And it’s not just developers who are frustrated with mundane tasks: everybody in every organization procrastinates. That’s an issue in and of itself, but it becomes an even larger problem with cross-departmental tasks, where multiple people across multiple teams don’t want to work on the same boring thing. And while agents can lighten some of this load, the burden still falls on the humans in each department to collaborate, communicate, and complete the task. That is, unless our AIs could somehow all work together to get it done instead.

Enter: interoperability. Done right, it can transform a giant mess of AI tools into a working AI strategy. But interoperability is not easy to build; otherwise, we would all have done it already. It involves connecting distributed systems across SaaS, public cloud, and on-prem that never needed connectors in the past, because humans could easily access all of them. This kind of plumbing between distributed systems has become one of the main difficulties for leaders who want to automate entire workflows or processes. In a perfect world, these agentic systems would work like a gold-medal relay baton pass. A sales AI closes a deal and passes the baton to the finance AI. The finance AI creates a forecast and passes the baton to the customer success AI. The customer success AI tracks retention and upselling opportunities and sends that information back to the finance AI, which sends next quarter’s quota to the sales AI. And on and on it goes.
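To make the relay metaphor a little more concrete, here is a toy Python sketch of that handoff loop. Everything in it is hypothetical: the agent functions, the numbers, and the idea of passing a plain dictionary as the “baton.” In a real system each step would be a separate agent reached over something like MCP or A2A, but the shape is similar: each agent reads the structured context the previous one handed over, adds its own piece, and passes it on, with every handoff logged so the workflow stays observable and auditable.

```python
from typing import Callable

# Hypothetical "baton": the structured context each agent hands to the next.
Baton = dict[str, float]
Agent = Callable[[Baton], Baton]


def sales(b: Baton) -> Baton:
    # Toy logic: the sales agent closes deals worth exactly the current quota.
    return {**b, "closed_revenue": b["quota"]}


def finance_forecast(b: Baton) -> Baton:
    # Toy forecast: assume next quarter's base revenue equals this quarter's.
    return {**b, "forecast": b["closed_revenue"]}


def customer_success(b: Baton) -> Baton:
    # Toy signals: 90% of revenue is retained, plus upsell worth 20% of it.
    return {**b, "retention_rate": 0.9, "upsell": 0.2 * b["closed_revenue"]}


def finance_quota(b: Baton) -> Baton:
    # Next quarter's quota = retained forecast revenue + upsell opportunities.
    return {**b, "quota": b["forecast"] * b["retention_rate"] + b["upsell"]}


# Relay order: sales -> finance -> customer success -> finance (-> back to sales).
RELAY: list[tuple[str, Agent]] = [
    ("sales", sales),
    ("finance", finance_forecast),
    ("customer_success", customer_success),
    ("finance", finance_quota),
]


def run_quarter(baton: Baton, audit_log: list[str]) -> Baton:
    """Pass the baton through every agent once, recording each handoff."""
    for name, agent in RELAY:
        baton = agent(baton)
        audit_log.append(name)  # auditable trail of which agent touched the baton
    return baton


log: list[str] = []
state: Baton = {"quota": 100.0}
for _ in range(2):  # "and on and on it goes": each new quota feeds the next quarter
    state = run_quarter(state, log)
print(round(state["quota"], 2), log)
```

The hard part Islam describes is everything this toy skips: getting real agents on different platforms to agree on what goes in the baton, and governing who is allowed to pass it to whom.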

According to Islam, creating an “agentic team” that actually works in an enterprise means avoiding the same pitfalls that plague human teams: siloed work, lock-in that keeps team members from exploring new ways of doing things, and unstructured workflows that cause context loss.

For companies wanting to do this, Islam advises creating a roadmap for your AI strategy that includes taking inventory of capabilities like APIs and events, normalizing access for models through MCP and A2A, creating governance for interaction that’s observable and auditable, mapping out the cross-system journeys the agents will have to take, and then building your AI teams. With really good interoperability, agents may even be allowed to “discover” each other, creating new pathways to automate work and share information.

It’ll take more than just code to prove your skills

As the resident Gen Z writer for Stack Overflow, I would be remiss if I didn’t discuss one of the issues most pertinent to my generation: how can junior developers get jobs in a market saturated with AI code generators? At a session by Romanian IT academy Coders Lab, it became clearer than ever to me that the traditional pathways for young professionals entering the tech industry are no longer walkable. Gone are the days of internships and learning on the job in your first entry-level role. To even land their first job, junior devs have to prove they’re more valuable than an AI code generator. To help them do this, Coders Lab gives junior developers the chance to work on actual client projects, guided by the senior developers and engineers who lead them. Through this work, junior developers can showcase their technical skills, improve soft skills like communication and collaboration, and learn directly from experienced mentors.

There were actually plenty of bright-eyed students at DeveloperWeek 2026, attending the DevWeek Hackathon or networking on the expo floor. While these sorts of things have always been part and parcel of getting your first job, they seem more important than ever for the youngest generation of tech workers, who appear to realize that their physical presence in communities and conversations sets them apart, viscerally, from the AI tools that can do similar (and sometimes better) work.

DeveloperWeek 2026 provided validation and exploration of many of the conversations already happening in the tech community: that AI tools are pretty good but not good enough, that they need more context to be truly helpful, and that more complex architectures are needed for true automation. All of this means there is still much work to be done for the tech industry to realize AI’s full potential, and that means there is still much need for human developers to do the work. What a relieving takeaway to have from a conference called DeveloperWeek.