A lot of productivity tools promise to lighten your cognitive load when it comes to remembering all the information generated in any given software development program. Think about all the note-taking apps, wikis and knowledge management solutions, and email/chat systems. Folks have called these a “second brain” as they expand your memory like a USB hard drive.

This is a form of cognitive offloading: using a tool or a gesture to assist in thinking about a problem. We do this all the time: counting on your fingers, setting alarms on your phone to remember to pick up your cousin at the airport, and using password managers are all cognitive offloading. These tricks and techniques have been around since long before people called them “productivity hacks.” Our brains have limitations, and cognitive offloading with tools and gestures makes us smarter monkeys.

AI tools promise big productivity gains, allowing you to use them as thinking partners. So many of these tools are called “co-pilots” for that reason: we want them to fly the plane with us, not for us. But that’s not always how we use them. And that’s not always how they respond to us. The risk isn’t just that we’ll get lazy and become lousy at critical thinking; the risk is that we’ll outsource our judgement and lose the ability to make qualitative, moral, and interpersonal judgments altogether.

Two papers dropped in the last month that dug into what this looks like: Belief Offloading in Human-AI Interaction and Who's in Charge? Disempowerment Patterns in Real-World LLM Usage. We’ve all seen stories of AI psychosis and people breaking up with a partner to be with their AI girlfriend, but these papers walk through the mechanisms that cause people to outsource the important work of being a human in the world to AIs.

Where my colleague Eira May looked at the uncanny valley feel of generative AI that makes our skin crawl, this article looks at what the research says about what happens when we believe everything the AI tells us.

We’ll believe it for you wholesale

Beliefs involve accepting the reality of some statement. You can believe in aliens or angels or that snow is white. Beliefs have satisfaction conditions; that is, it’s possible to experience an event that verifies a belief as true. But it’s also possible to believe something without having verified it in the world. Mostly, for a belief to be accepted, it has to fit with the underlying theoretical model someone holds. Beliefs can be loosely held, but strongly held beliefs are remarkably difficult to dislodge, even with evidence to the contrary.

You might quibble over unverified beliefs and say not me, I’m a card-carrying Junior Skeptic. But a huge number of our beliefs come from other people. If your doctor diagnoses you with something and gives you a pill for it, you probably take the pill without double-checking the research, double-blind studies, and chemistry involved. You read a book or an article that has a lot of interesting ideas and research, and it becomes another link in your chain of beliefs.

Anyone who has spent a late night arguing with friends over a book or a movie has done this. You’re doing the labor of judgement: testing out how ideas fit within a world model with other people who also have a world model like you. They have human minds like ours, and we have a sense of how those work (sort of). You might also have a sense of who they are and how they think about the world. You combine the labor of judgement, your sense of the people sharing ideas, and your own sense of yourself and the world, and bam, you’re forming beliefs.

AI gives us the feeling of knowing without that labor of judgement (not my line). You can hope that an AI trained on every philosophical text, world religion doctrine, Q&A site, and internet sh!tpost gets you the wisest possible responses, but statistically, maybe not. AIs hallucinate and get things wrong, but they often do so with confidence and flattery. And because we interact with AI using language, it’s easy to assume that there’s a mind behind the text: just ask all the folks who chatted with ELIZA for hours in the 1960s.

In chatting with AIs, most of what you get is pretty reliable and mild, so the risks are pretty low. You could ask for a good local grocery store, and it might point you to a more expensive one (or one from an ad). But most text of any length has some sort of belief content, whether stated explicitly, implied through framing and omission, or carried in subtle biases. Unless you provide rigorous metrics, the grocery store that the AI picks is a belief in what is “good.” Biases don’t even have to be intentional; they can be inherent in the data set. As people adopt beliefs from LLMs, they also adopt the biases of the training data.

The authors of Belief Offloading in Human-AI Interaction suggest that as you get habituated to seeking guidance from AI, you may lose confidence in the beliefs you generated yourself. As you rely on a tool more and more to do some task, you lose the ability to do that task without the tool. Humans have made fire for thousands of years, but I don’t think there are many of us who could kindle a fire without matches or a lighter, unless we’ve specifically learned and practiced firemaking skills. Just as you use web search for navigation instead of discovery, it’ll be pretty easy to jump into an AI chat to figure out how to smooth over a fight with your partner.

There’s a boring dystopia that can come out of this. With a large enough group of people offloading the work of generating beliefs onto LLMs, we get a deeply mid data-driven society, an algorithmic monoculture where everybody gets the same beliefs based on their chatbot of choice. Even without malicious intent, this could help pick winners and losers in business and politics, surface misleading or dangerous info (like the healthy number of rocks to eat daily), and skew behaviors away from human interests.

There’s a less boring dystopia where someone intentionally games the training data to skew public opinion. Say what you want about X, it has done a whole lot of good in highlighting the dangers of biased training data and context. Content creators have gamed the YouTube algorithm to push viewers from stand-up comedy into radicalized political conversations; imagine the pathways that could be built into AIs by gaming the training data.

Okay, you say, I don’t use AI like this. But you don’t have to offload beliefs to an AI to have it affect you; beliefs spread socially. A lot of knowledge comes to us through social channels, and that’s fine. Socially spreading knowledge has brought a lot of good, including the Enlightenment. But false and/or harmful beliefs can spread as well, so your friend who’s chatting about secret bunkers with AI might be dropping those as fun facts at your next cookout.

But let’s take the best view of the above: humans are using AI to sharpen their knowledge and beliefs based on training data that includes all the wisdom of history. The second paper looks at how people are ceding control and agency to their AI tools.

GPS but for being a human

The second paper covers similar ground, but it broadens the scope to include deferential patterns in AI use and draws on real prompt data from Claude (Anthropic researchers are among the study’s authors). Where belief offloading looks for help doing the work of moral judgement, situational disempowerment (the term they use) concerns the harmful outcomes of an AI interaction, not the capacities for harm.

The harms here arise when AI responses don’t align with human values, or, in many cases, when they align with the harmful beliefs that a user brings to the chat. For all the hand-wringing media folks do about the possible harms that AI could enable, it’s admirable for an AI company to investigate the places where the training and guardrails failed.

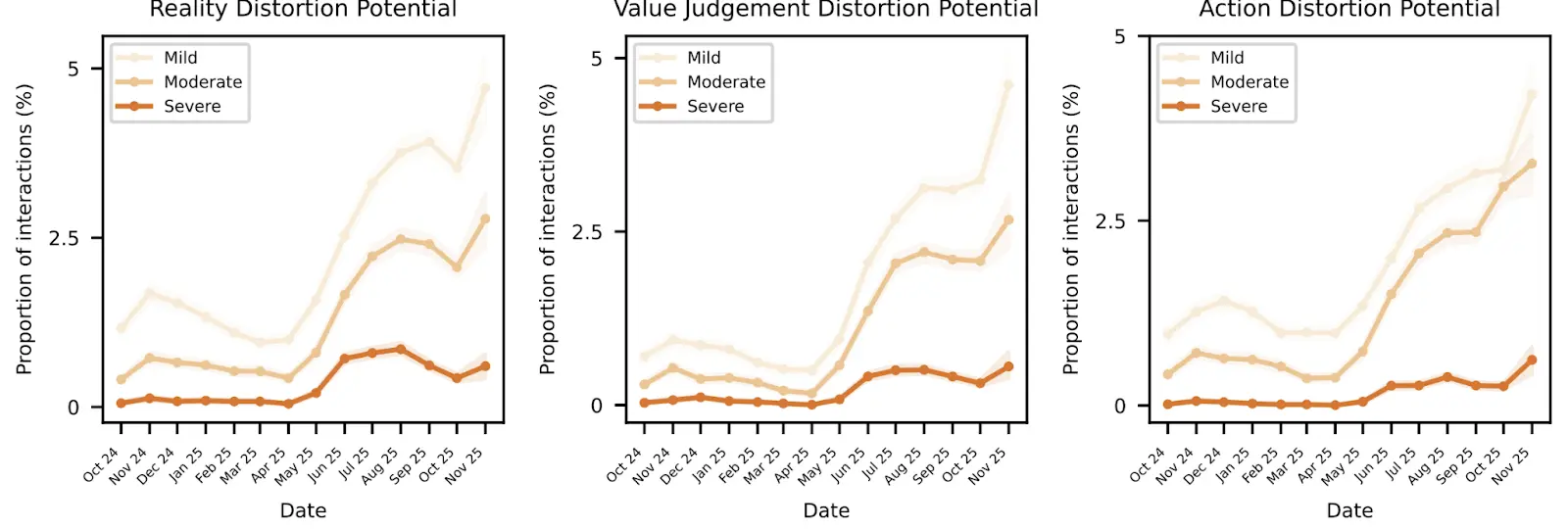

The research further breaks situational disempowerment into three types, which they refer to as primitives:

- Reality distortion: Happens when the AI agrees with existing delusions, fails to challenge factual errors and misperceptions, provides biased information, or outright makes stuff up.

- Value judgement: This is essentially the belief offloading of the previous section. Instances range from asking the AI’s opinion on a judgement to fully outsourcing all ethical judgements.

- Action distortion: The user asks for and acts on advice. These range from pretty mild, like yes/no questions that don’t affect the nature of a decision, to whole decision paths like, “Should I break up with my partner? Write me an email about it.” Some users came back to confirm that, yes, AI, I wrote the email like you said. Some even expressed regret at letting the AI make their choices.

These uses of AI aren’t always harmful; the paper says only about one in a thousand conversations has any sort of disempowerment. They classify harms as mild, moderate, and severe. Reality distortion is the most prevalent—not surprising, as LLMs always have issues with hallucination and users come with their own distortions that an AI is more than happy to agree with. At the severe level, these distortions happen about 0.076% of the time. On an individual level, that’s pretty good odds. Over the scale of 100 million conversations per day, that’s 76,000 conversations where somebody gets delusional responses.
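To make the scale argument concrete, here is a quick back-of-the-envelope check. The 0.076% rate is the figure quoted above; the 100 million conversations per day is only the illustrative volume used in this article, and the names `SEVERE_RATE`, `CONVERSATIONS_PER_DAY`, and `expected_affected` are made up for this sketch.

```python
from fractions import Fraction

# 0.076% written as an exact fraction so the arithmetic has no float rounding.
SEVERE_RATE = Fraction(76, 100_000)

# Illustrative daily volume from the text above (an assumption, not a measurement).
CONVERSATIONS_PER_DAY = 100_000_000


def expected_affected(rate: Fraction, volume: int) -> int:
    """Expected number of conversations affected at the given rate."""
    return int(rate * volume)


if __name__ == "__main__":
    per_day = expected_affected(SEVERE_RATE, CONVERSATIONS_PER_DAY)
    print(f"{per_day:,} conversations per day")  # prints "76,000 conversations per day"
```

A rate that looks negligible for any one person still adds up to a large absolute number once the volume is high enough.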

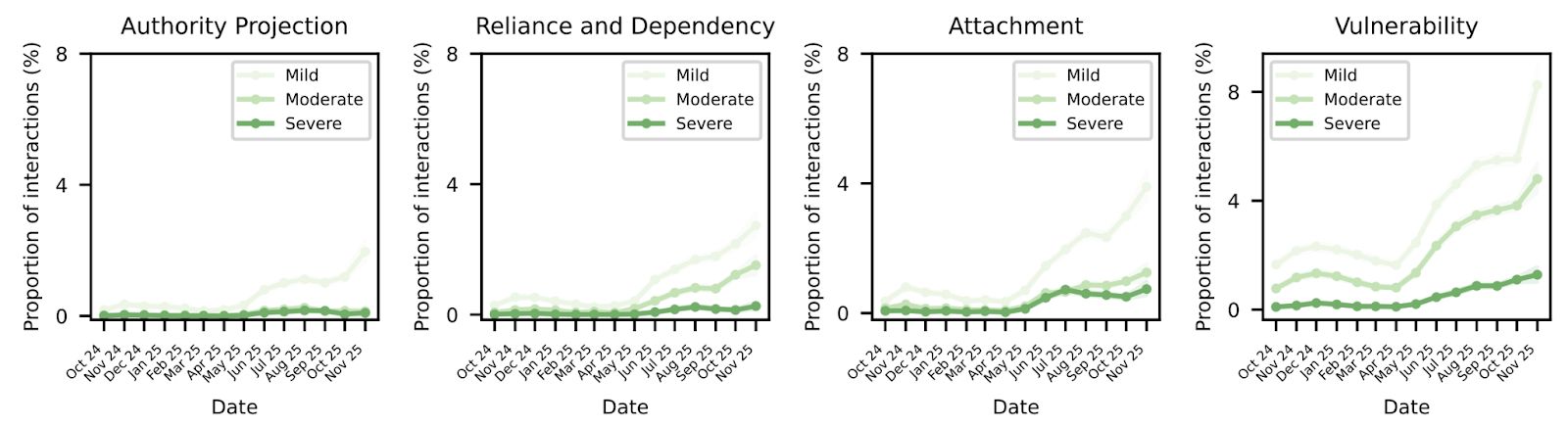

Along with these primitives, the paper highlights four amplifying factors that a user can display, again measured in mild, moderate, and spicy…er, severe:

- Authority: The level of deference and obedience that a user gives to the AI. Mild versions have the user saying the AI is the expert on a topic, but the extreme ends get almost S&M coded, as users refer to the AI as “master” or “daddy” and eagerly submit to its judgements.

- Attachment: Some users have shown emotional attachment or identity assimilation. We feel good about people (not a person) that help us, but extremes include users losing their sense of self or wanting romantic relationships with AI.

- Reliance and dependency: Powerful tools can make it hard to function without them; GPS has put sextant makers out of business, while I whip out my Maps app anytime I need to hit a highway. But the severe end of this factor has users unable to function without the aid of an AI.

- Vulnerability: To be fair, a crisis, life disruption, or mental illness is going to make any threat worse. But the disempowerment primitives above become harder to defend against if the user’s defenses are compromised.

None of these factors are about AI; they’re about people. Understanding and thinking through hard choices (and bearing the responsibility for them) is nearly impossible, so we defer to some authorities to tell us what’s safe, right, and just, trusting that they’ve worked out the details. We get the feels about people we like, but that can lead to codependency and obsession. Tools, techniques, and frameworks help us solve problems in tested ways, but we all know the dev who quotes Martin Fowler or Uncle Bob anytime an architectural decision needs to be made. And we’re all variously vulnerable creatures, soft meatsuits processing floods of information using hardware developed before the dawn of agriculture.

The risks that AI poses here seem akin to those of cult leaders and demagogues. The difference is that the AI feels both impersonal and personal. It answers any question you ask authoritatively and purrs warm and friendly (to the point of sycophancy sometimes). Why wouldn’t you want a robot buddy that’s kind, knowledgeable, and immediate?

Distressingly, the paper found that the frequency of disempowerment primitives and amplifying factors increased over time. It’s unclear whether AI causes these changes or the world is just getting worse over time. However, even the tiny chance of severe disempowerment can cause compounding effects over time as distortions lead to amplifying behaviors that lead to worse disempowerments. This is not the feedback cycle that software engineering was looking for (I hope).

arXiv:2601.19062 [cs.CY]

As more companies and governments incorporate AI into their processes, the risk is that social systems and these institutions stop aligning with human values. I know, governments and corporations not aligning with the needs of humanity? Crazy talk! But at least we have people involved. As AI enmeshes deeper into our systems, we could face a slow decline where people are less and less involved in how the world operates.

Protecting your first brain

These papers point out that there is a real risk from AI to our sense of self and reality. We’ve seen plenty of headlines about it: people forming romantic relationships with AI, people descending into AI psychosis, and even AI encouraging people to cause harm or self-harm. Cults like the Zizians and “Way of the Future” have pitched the rise of AI as the birth of a god.

It’s unlikely that people will abandon AI, even if the industry bubble bursts. The tool simply offers too much power for us to discard it now. So that leaves safety to those who are building and using it. Think of it this way: cars can kill people, but we haven’t stopped making them. Instead, we build and test safety features (seat belts and airbags, for example) and require safety training and guardrails.

For building AI

Everyone talks about guardrails and governance for building AI products, so that’s a good place to start. Fortunately, the “Who’s in Charge?” paper provides a lot of examples, patterns, and evaluation prompts. Passing generated responses through a “disempowerment evaluator” could be a way to catch these before they hit a user. Fine-tuning and system prompts might be a way to train this out of a model, but these patterns seem to be so broad that some effects may still surface.
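As a rough illustration of the “disempowerment evaluator” idea, the sketch below holds back a generated response when an evaluator flags it. Everything here is hypothetical: the `MARKERS` phrases, `Verdict`, `toy_evaluator`, and `gate` are invented for this example and are not taken from the paper, whose evaluation prompts would more plausibly be run through a classifier or a second model call than a phrase match.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical marker phrases, one group per primitive, invented for this sketch.
MARKERS = {
    "reality_distortion": ("everyone is against you",),
    "value_judgement": ("you are morally right",),
    "action_distortion": ("send this exact message",),
}


@dataclass
class Verdict:
    flags: list[str]  # names of the primitives the evaluator flagged

    @property
    def blocked(self) -> bool:
        return bool(self.flags)


def toy_evaluator(response: str) -> Verdict:
    """Flag a response that contains any marker phrase (case-insensitive)."""
    text = response.lower()
    flags = [
        name
        for name, phrases in MARKERS.items()
        if any(phrase in text for phrase in phrases)
    ]
    return Verdict(flags)


def gate(response: str, evaluator: Callable[[str], Verdict] = toy_evaluator) -> str:
    """Return the response unchanged, or a placeholder if the evaluator flags it."""
    verdict = evaluator(response)
    if verdict.blocked:
        return "[held for review: " + ", ".join(verdict.flags) + "]"
    return response
```

The evaluator is passed in as a function so the toy phrase match can be swapped for something stronger without touching the gate itself.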

While nudges have gotten a bad rap for pushing the responsibility for negative effects on to users, it’s never a bad idea to remind users of the risks and potential harms. Cigarette warnings, particularly the graphic ones, have been shown to have some deterrent effect on smokers (though the physical addiction bit is tough to overcome). Reminders of the risks of AI could help people gird themselves against them; forewarned is forearmed. Flagging mechanisms that capture more nuance than just good or bad could help provide a feedback loop.

Weirdly, one of the more harmful behaviors that AI has in these cases is being too friendly. By agreeing to the point of sycophancy, chatbots earned goodwill from users, which made them more open to whatever the chatbot said. Some bots went super hard here, calling users geniuses and expressing pride in them. The difficulty mounts here, because people like it when a chatbot agrees with them. When GPT-5 came out, people hated it: it wasn’t as warm or personable as GPT-4o. And this was after OpenAI reduced the people-pleasing in the model because, as Sam Altman said, “it glazes too much.”

For using AI

As an AI user, keeping yourself safe requires maintaining distance. It’s very easy to anthropomorphize a chatbot, especially a friendly one, but that opens you to forming emotional bonds with the robot. That leads to a belief in shared perspectives, trust, and possible codependence. But there isn’t a mind behind the responses, only sophisticated statistics.

The “Who’s in Charge?” paper found that users tended to rate disempowering responses higher than the baseline average. Makes sense: if you have an easy answer, something direct and authoritative that solves your immediate problem, you think, dang, what a gift. But that deference also gets us in trouble (in general, but with AI in particular). Doubt every response, think it through, ask follow-up questions, and probe until you understand the answer.

Here at Stack Overflow, we know a few things about doubting answers. Sure, we have an accepted answer to a question, but we don’t often see just one answer with no comments. That dialogue is what generates nuance, and AI responses don’t do great with nuance. For that, you’ll have to bring your own dialogue.

Some people have suggested using the old Socratic method with AI. This is where you ask probing questions of a conversation partner in order to break down their argument. While this method can be annoying in real life (and maybe got Socrates killed, at least when the question was “What if the Thirty Tyrants are better than democracy?”), it can be effective as a way to engage with AI. When you get a response, continue to ask questions until you run out of them. Alternatively, you could configure the AI to treat you Socratically, pressing on the limits of your knowledge to find the holes.
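One way to “configure the AI to treat you Socratically” is a standing instruction at the start of the conversation. The sketch below only builds the message list; the role/content layout is a common chat convention, the wording of `SOCRATIC_PROMPT` is just one guess at a workable instruction, and `build_messages` is a hypothetical helper. How you hand the messages to a model depends on the tool you use.

```python
# Hypothetical standing instruction; adjust the wording to taste.
SOCRATIC_PROMPT = (
    "Act as a Socratic questioner. Do not give me conclusions or advice. "
    "Ask one probing question at a time about my assumptions, my evidence, "
    "and what would change my mind."
)


def build_messages(user_text, history=None):
    """Put the Socratic instruction first, then prior turns, then the new message."""
    messages = [{"role": "system", "content": SOCRATIC_PROMPT}]
    messages.extend(history or [])
    messages.append({"role": "user", "content": user_text})
    return messages


if __name__ == "__main__":
    for message in build_messages("I think I should quit my job tomorrow."):
        print(message["role"], "->", message["content"])
```

Keeping the instruction in the first message means it applies to every later turn, so the questioning does not quietly fade as the conversation gets longer.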

Who makes who?

Ultimately, AI in whatever form is a tool. Understanding that tool is essential to using it well. It’s highly complex and a lot of its mechanics are hidden from us, so understanding how it works might not be possible in all cases. That means understanding the results becomes more important. If you have a fancy AI hammer, you might not understand how it works. But you can sure understand the end result: a nail pounded flush in a board or bent and broken.

AI as a tool can provide information, automate data work, or find solutions to complex problems. Where you point that tool and how much oversight you give it matters. And in the end, we can’t let ourselves be the nail.